The Art of the Quality Board Review: When Your Leadership Review Becomes the Engine of Improvement, Not Just a Monthly Ritual

The Meeting That Changed Everything

It was 9:07 AM on the first Tuesday of the month, and the conference

room on the second floor of a Tier 1 automotive supplier in Bratislava

was already filling up. Coffee cups lined the table. Slide decks glowed

on laptops. The quality director arrived last, as always, carrying a

printed dashboard that nobody had seen yet.

For three years, this monthly quality review had been what most

people expected: a recitation of scrap rates, a list of open customer

complaints, a nervous update on the latest audit findings, and a vague

promise to “do better next month.” People attended because they had to.

They left because it was over.

But this particular Tuesday was different. The quality director

didn’t open with the dashboard. He opened with a single defective part

sitting on the table — a stamped bracket with a crack running through

the mounting hole. And he asked a question nobody expected:

“Who can tell me the story of how this crack

happened?”

Silence. Then the production supervisor spoke up. Then the process

engineer. Then the shift leader. Within twenty minutes, the room wasn’t

looking at charts — it was reconstructing a narrative. They traced the

crack back to a tooling change three weeks earlier, a skipped die

maintenance step, a training gap on the night shift, and a

misinterpretation of the control plan.

By the end of that meeting, the team had identified the root cause,

assigned corrective actions with real owners and deadlines, and — most

importantly — they were engaged. For the first time in three

years, the quality board review wasn’t a report. It was a conversation.

It was a decision-making engine.

That’s what this article is about: transforming your quality board

review from a monthly obligation into the most powerful improvement

mechanism your organization has.

Why Most Quality Reviews Fail

Let’s be honest. Most quality board reviews are broken. Not because

the people in them don’t care, but because the design of the

review itself works against its purpose. Here are the five most common

failure modes I’ve seen in twenty-five years of consulting:

1. The Data Dump

Someone — usually a quality engineer — spends three days preparing a

seventy-slide deck that covers every metric, every chart, every trend.

The meeting becomes a presentation, not a discussion. People sit

passively, nod occasionally, and leave without having made a single

decision.

The fix: Limit your review to no more than twelve

key indicators. If a metric doesn’t trigger a decision or an action, it

doesn’t belong in the board review. Park it in a weekly operational

report.

2. The Blame Game

When a defect rate spikes, the room fills with defensive energy.

“That was the supplier’s fault.” “The operators didn’t follow the

standard.” “Maintenance didn’t respond fast enough.” The review becomes

an exercise in attribution, not improvement.

The fix: Establish a ground rule — we discuss

systems, not individuals. Every defect is a system failure first.

The question is never “who messed up?” but “what in our system allowed

this to happen?”

3. The Rearview Mirror

Most reviews focus exclusively on what happened last month. Scrap was

2.3%. There were four customer complaints. PPM was 340. It’s all

backward-looking. Valuable, yes. But incomplete.

The fix: Dedicate at least 30% of your review time

to forward-looking indicators: upcoming changes, emerging risks,

preventive actions in progress, and leading indicators that predict

future quality performance.

4. The Action Item Graveyard

You know the pattern. Every month, the same corrective actions appear

on the list. “Retrain operators.” “Update work instruction.” “Improve

incoming inspection.” They get discussed, nodded at, and carried forward

to next month — unchanged and uncompleted.

The fix: Track action items with the same rigor you

track defects. Every action has an owner, a due date, and a verification

step. If an action is overdue, it’s escalated. If it’s completed, its

effectiveness is verified. No action lives beyond two review cycles

without a formal extension or escalation.
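The tracking rules in this fix can be sketched in a few lines of Python. This is only an illustration, not a prescribed tool: the `ActionItem` fields, the `review_status` function, and the status strings are hypothetical names. Counting age as the number of reviews since the action was raised, and reading "beyond two review cycles" as an age greater than two, are also assumptions.

```python
from dataclasses import dataclass
from datetime import date

# From the rule above: no action lives beyond two review cycles
# without a formal extension or escalation.
MAX_OPEN_CYCLES = 2


@dataclass
class ActionItem:
    title: str
    owner: str
    due: date
    verification: str        # how effectiveness will be checked
    opened_cycle: int        # number of the review in which the action was raised
    completed: bool = False
    verified: bool = False   # effectiveness confirmed after completion
    extended: bool = False   # formal extension granted by the board


def review_status(item: ActionItem, today: date, current_cycle: int) -> str:
    """Return the status to read out for one action in the review."""
    if item.completed:
        return "closed" if item.verified else "verify effectiveness"
    if today > item.due:
        return "escalate: overdue"
    age = current_cycle - item.opened_cycle  # assumption: age in review cycles
    if age > MAX_OPEN_CYCLES and not item.extended:
        return "escalate: open beyond two cycles"
    return "on track"
```

Calling `review_status` for every open item at the start of the action review gives a list in which nothing can be carried forward silently: each item is on track, awaiting verification, closed, or escalated.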

5. The Wrong People in the Room

The quality review is attended by the quality department. That’s the

problem. Quality is not a department — it’s an outcome of every function

in the organization. When production, engineering, procurement, and

maintenance are absent, the review becomes an echo chamber.

The fix: The quality board review requires

cross-functional participation. Production leadership must be there.

Engineering must be there. Procurement must be there when supplier

quality is on the agenda. The meeting is not a quality department

meeting — it’s a business quality meeting.

Designing the Review That Actually Works

After decades of sitting in hundreds of quality reviews across

automotive, electronics, medical devices, and heavy industry, I’ve

developed a structure that consistently produces results. It’s not

complicated. But it requires discipline.

The Four-Part Architecture

Part 1: Voice of the Customer (10 minutes)

Start with the customer. Always. Not with your internal metrics, but with the customer’s experience. Review:

- Open customer complaints and their status
- Customer scorecards and ratings
- Warranty trends
- Recent customer audits or visits
- Any customer-initiated changes or concerns

This sets the tone. It reminds everyone in the room that quality is

not an internal abstraction — it’s a promise made to someone who pays

for your product.

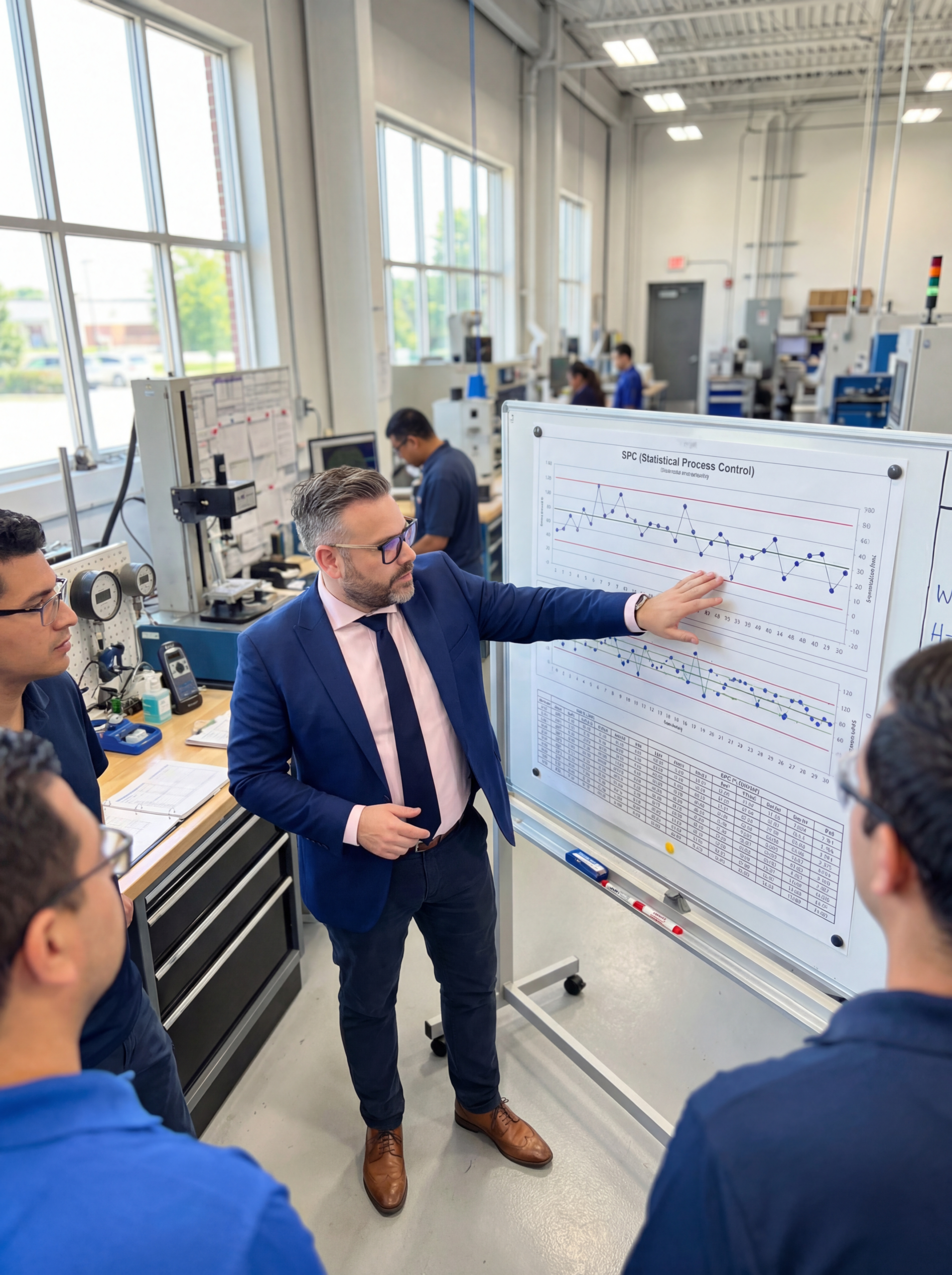

Part 2: Process Health Dashboard (15 minutes)

This is your balanced scorecard of quality indicators, covering:

- Output quality: First pass yield, scrap rate, rework rate, PPM
- Process stability: SPC signals, control chart abnormalities, process capability indices (Cpk/Ppk)
- Prevention metrics: Open CAPAs, overdue corrective actions, audit findings closure rate
- Leading indicators: Upcoming process changes, new product introductions, supplier changes, training completion rates

Present the data visually. Use traffic light coloring

(green/yellow/red) so the team can instantly see where attention is

needed. But — and this is critical — don’t present every metric every

month. Highlight the deltas. What changed? What’s trending?

What needs discussion?
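One way to combine traffic-light coloring with a focus on deltas is sketched below. The `Indicator` class, the threshold fields, and the 10% relative-change cutoff are all assumptions made for illustration. Real thresholds would come from your own targets and customer requirements.

```python
from dataclasses import dataclass


@dataclass
class Indicator:
    name: str
    previous: float              # last month's value
    current: float               # this month's value
    yellow_at: float             # hypothetical threshold: attention starts here
    red_at: float                # hypothetical threshold: action required here
    higher_is_better: bool = False


def traffic_light(ind: Indicator) -> str:
    """Color an indicator against its yellow and red thresholds."""
    value, yellow, red = ind.current, ind.yellow_at, ind.red_at
    if ind.higher_is_better:
        # Flip signs so that "larger is worse" holds for the checks below.
        value, yellow, red = -value, -yellow, -red
    if value >= red:
        return "red"
    if value >= yellow:
        return "yellow"
    return "green"


def needs_discussion(ind: Indicator, min_change: float = 0.10) -> bool:
    """Flag an indicator that is not green, or that moved by more than
    min_change (relative to last month) even though it is still green."""
    if traffic_light(ind) != "green":
        return True
    if ind.previous == 0:
        return ind.current != 0
    return abs(ind.current - ind.previous) / abs(ind.previous) > min_change
```

With illustrative numbers, a scrap rate that moved from 2.0% to 2.3% against a yellow threshold of 2.5% is still green, but the 15% relative change puts it on the agenda. A stable green metric stays off it.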

Part 3: Deep Dive — One Topic (25 minutes)

This is the heart of the review. Instead of superficially covering twenty topics, go deep on one. Rotate the focus:

- Month 1: A specific defect or failure mode. Trace it from detection to root cause to corrective action.
- Month 2: A process change. Review the change point management, risk assessment, and validation results.
- Month 3: A supplier quality issue. Analyze the supply chain risk, containment actions, and supplier development plan.
- Month 4: A systemic improvement initiative. Review progress, barriers, and next steps.

The deep dive is where real learning happens. It’s where the team

constructs the narrative, identifies systemic gaps, and makes strategic

decisions.
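If you plan the deep-dive calendar in a script or spreadsheet, the four-month rotation above reduces to a modulo lookup. The sketch below assumes reviews are numbered from 1 and that the cycle repeats after Month 4. The function name `deep_dive_topic` is hypothetical.

```python
DEEP_DIVE_ROTATION = [
    "Specific defect or failure mode",
    "Process change",
    "Supplier quality issue",
    "Systemic improvement initiative",
]


def deep_dive_topic(review_number: int) -> str:
    """Return the deep-dive theme for the Nth monthly review (N starts at 1)."""
    if review_number < 1:
        raise ValueError("review_number starts at 1")
    # Reviews 1-4 map to the four themes; review 5 starts the cycle again.
    return DEEP_DIVE_ROTATION[(review_number - 1) % len(DEEP_DIVE_ROTATION)]
```

A fixed rotation is only a default. A serious customer escape in a "process change" month would reasonably take over that month's deep dive.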

Part 4: Action Review and Forward Look (10 minutes)

Close with:

- Action item review: Status of all open actions from previous reviews. No hiding. No deferring without reason.
- Risk radar: What’s coming in the next 30-60 days that could impact quality? New product launches, personnel changes, equipment overhauls, supplier transitions.
- Decisions and commitments: Every meeting ends with clear decisions documented. Who does what by when.

The Role of the Chair: Conductor, Not Presenter

The person leading the quality board review has an outsized influence

on its effectiveness. If they present, the room listens. If they

facilitate, the room thinks.

The best quality review chairs I’ve seen share these traits:

They ask more than they tell. Instead of presenting

the data and explaining what it means, they present the data and ask the

room: “What do we see here? What’s the story behind this trend?”

They protect the conversation from dominance. In

every room, there are loud voices and quiet ones. The chair’s job is to

pull insights from the quiet voices — often the people closest to the

process who have the most valuable perspective.

They enforce the discipline of the format. When

someone tries to turn the deep dive into a general complaint session,

the chair redirects. When an action item lacks an owner or a date, the

chair stops the conversation until it’s assigned.

They model the behavior they expect. If the chair

admits uncertainty, asks for help, and acknowledges when a previous

decision was wrong, the room learns that honesty is safe. That’s how you

build a quality culture — not through posters on the wall, but through

behaviors in the meeting.

The Metrics That Matter: Building Your Quality Board Dashboard

Not all metrics deserve board-level attention. Here’s a framework for

selecting the right ones:

Tier 1: Always on the Dashboard

- Customer complaints (open/closed trend)
- First Pass Yield (FPY): the single most honest metric of process health
- Scrap cost: because money talks when charts don’t
- Open CAPA aging: overdue corrective actions are a leading indicator of future problems
- Process capability (Cpk) for critical characteristics
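For readers who want the arithmetic behind three of these Tier 1 numbers, here is a minimal sketch using the standard textbook definitions. The function names are hypothetical. Note that `capability_index` uses the overall sample standard deviation, which strictly gives a Ppk-style figure. A true Cpk uses a within-subgroup sigma estimate, usually taken from the control chart.

```python
from statistics import mean, stdev
from typing import Sequence


def first_pass_yield(units_started: int, units_good_first_time: int) -> float:
    """Share of units that pass without rework or repair."""
    return units_good_first_time / units_started


def ppm(defective_units: int, total_units: int) -> float:
    """Defective parts per million."""
    return defective_units * 1_000_000 / total_units


def capability_index(samples: Sequence[float], lsl: float, usl: float) -> float:
    """min(USL - mean, mean - LSL) / (3 * sigma).

    Assumption: sigma is the overall sample standard deviation, so the
    result is closer to Ppk than to a subgroup-based Cpk.
    """
    mu = mean(samples)
    sigma = stdev(samples)
    return min(usl - mu, mu - lsl) / (3 * sigma)
```

For example, 34 defective parts in 100,000 shipped corresponds to the 340 PPM figure used earlier in this article, and 950 good-first-time units out of 1,000 started gives an FPY of 95%.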

Tier 2: Rotating / Contextual

- Supplier PPM: when supplier quality is a risk theme
- SPC out-of-control signals: when process stability is under pressure
- Audit finding closure rate: when compliance is a focus area
- Training completion: when new products or processes are launching

Tier 3: Reported, Not Reviewed

- Detailed inspection results, individual operator performance,

granular SPC data, individual gage R&R studies — these belong in

operational meetings, not board reviews.

The principle is simple: the board reviews what requires

cross-functional decisions. Everything else belongs in the

operational tier.

From Meeting to System: The Quality Review as a Living Organism

Here’s what separates world-class organizations from the rest: their

quality board review isn’t an event — it’s a system. It

connects to everything.

Connected to Gemba: The best reviews include photos

from the shop floor. Not stock photos — real photos taken that week. A

defective part on the table is worth a thousand data points.

Connected to Strategy: The quality review doesn’t

exist in isolation. It’s linked to the organization’s strategic quality

objectives. If the strategic goal is to reduce customer complaints by

50%, the board review tracks progress toward that goal every month.

Connected to CAPA: Every corrective action discussed

in the review feeds back into the CAPA system. Every completed CAPA is

verified in the review. The two systems are intertwined.

Connected to People Development: The review is also

a teaching moment. When the team conducts a deep dive on a Weibull

analysis or an FMEA update, people learn. Over time, the review builds

organizational capability — not just compliance.

Connected to Recognition: World-class reviews

celebrate wins. When a team eliminates a chronic defect, reduces scrap

by 30%, or successfully launches a new process with zero customer impact

— that deserves acknowledgment. Not a generic “good job” from the

director, but a specific, genuine recognition of the people and the

process that made it happen.

A Practical Checklist: Is Your Quality Review Working?

Ask yourself these questions after your next review:

If you can answer “yes” to all six, your quality board review is

doing its job. If not, you have a design problem — not a people

problem.

The Quiet Revolution

The transformation of a quality board review from a monthly reporting

ritual into an engine of continuous improvement doesn’t happen

overnight. It doesn’t require new software, a reorganization, or a

consulting engagement. It requires something harder: a change in how

leaders think about the meeting itself.

The review is not a place to report quality. It’s a place to

create quality. Every decision made in that room ripples out to

the shop floor, to the supply chain, to the customer. Every question

asked shapes the culture. Every action tracked — or not tracked — sends

a signal about what the organization truly values.

The quality director in that Bratislava conference room understood

something fundamental: the most powerful quality tool isn’t a

chart or a framework — it’s a well-run conversation among the right

people, focused on the right questions, at the right time.

That’s the art of the quality board review. And it’s available to any

organization willing to redesign the meeting they’re already having.

Peter Stasko is a Quality Architect with 25+ years of experience

in automotive, manufacturing, and industrial quality management. He

specializes in building quality systems that work in the real world —

not just on paper. His approach combines deep technical expertise with a

pragmatic understanding of how organizations actually learn and

improve.